What Happened to the Census?

I must preface this commentary by stating that it is always easy to assess failures after the fact; hindsight makes it easy to draw conclusions about what should have been identified in the first place. And not having been involved in the census development, I am merely adding commentary as an end-user. However, as a business and software analyst, I see too many questions that remain about this IT project - many of which we can learn from in future projects and in projects for our own businesses. As such, I think it worthwhile to analyse and comment on the problems that plagued the census in 2016.

My commentary was spurred by an opinion piece published on News.com.au:

http://www.news.com.au/technology/online/hacking/expert-analysis-how-did-the-abs-get-the-digital-census-so-wrong/news-story/9894dba08a75d689c52d75d4cf52163c

For me, some of the fundamental issues raised by the author of the post included:

- IBM (the main contractor on the census) had already been banned from participating in IT tenders in Qld due to their part in a $1.2 billion health payroll debacle;

- Despite the ABS claiming a Distributed Denial of Service (DDoS) attack caused the census outage, data indicated that no significant attack occurred in Australia at that time, and minor DDoS attacks should have been expected in the normal course of running any online solution;

- The ABS staff tweeted that capacity had been tested for 1,000,000 form submissions per hour. But simple mathematics would indicate that this is grossly inadequate; and

- IBM was paid $9.6M+ to develop and deploy the solution which failed. Start-ups have been able to develop more scalable and robust applications with a much smaller budget and fewer resources.

IBM Hosting/Solution:

Beyond an IT degree, I also have strong experience and qualifications in data/statistical analysis. And I have no reservations in acknowledging that the analysis of the census data is likely to be extensive and complex. However, while the analysis may be complex, the data collection should have been relatively straightforward. In fact, the form itself resembles little more than a simple survey - with very few "conditionals" in the process and no image or other intensive processing/logic required. Hence my question: why was the census survey (at least the data collection component) such a complex solution?

In the IT industry, news of hosting/platform solutions is commonplace. However, I have heard little (if anything) on the merits of IBM. The landscape is currently dominated by Amazon's AWS and to a lesser extent Microsoft's Azure - both clear market leaders in the space. Why then was IBM (reportedly a less tested solution) chosen as the alternative?

DDoS:

When you have a lot of windows open on your computer, your computer runs significantly slower as it tries to process requests from the applications you have open. A Denial of Service attack works in a similar way - it tries to bog down a server/online service by making millions of simultaneous requests to the server, essentially rendering it "busy" for legitimate users. DDoS attacks can cause, and have caused, a lot of issues for computing services across the globe, especially when an attack is large. Given that DDoS attacks are known to be commonplace, this should have been something that the ABS and its contractors pre-empted. And, save for a catastrophic DDoS, measures should have been in place to handle DDoS attacks on the service. However, in this case there is little to no evidence to suggest that any major DDoS was launched on the census. In fact, one could argue that legitimate traffic was being mistaken for DDoS attacks simply due to miscalculations on the part of the ABS.

Inadequate Capacity:

Hours before its spectacular failure, ABS employees were gloating via Twitter that "The online Census form can handle 1,000,000 form submissions every hour. That’s twice the capacity we expect to need.". Yet with over 15 million respondents expected to complete the census online, simple mathematics would suggest that 1,000,000 submissions per hour was a gross under-estimate: even if submissions were spread perfectly evenly, it would take 15 hours of running at full tested capacity to collect them all. And this doesn't even account for the fact that the census was held on a weekday, and most respondents were likely to submit their responses in the evening - post 7pm. I find this point rather ironic as the census was being run by statisticians.
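To make the "simple mathematics" concrete, here is a rough back-of-envelope sketch. The 15 million and 1,000,000 figures are the ones quoted above; the peak scenario (half of all forms arriving within a four-hour evening window) is purely my own assumption for illustration, not a figure from the ABS or from the article, and the function name is made up for this example.

```python
def required_hourly_rate(total_forms: int, share_in_peak: float, peak_hours: float) -> float:
    """Forms per hour needed if `share_in_peak` of all forms
    arrive evenly spread across a window of `peak_hours`."""
    return total_forms * share_in_peak / peak_hours


TOTAL_FORMS = 15_000_000     # respondents expected online (figure quoted in this post)
TESTED_CAPACITY = 1_000_000  # form submissions per hour (from the ABS tweet)

# Best case: submissions spread perfectly evenly, system running flat out.
hours_at_capacity = TOTAL_FORMS / TESTED_CAPACITY

# Hypothetical evening peak: 50% of forms within 4 hours (assumed values).
peak_rate = required_hourly_rate(TOTAL_FORMS, share_in_peak=0.5, peak_hours=4)

print(f"Hours needed at full tested capacity: {hours_at_capacity:.0f}")
print(f"Hourly rate needed in assumed peak:   {peak_rate:,.0f}")
print(f"Peak demand vs tested capacity:       {peak_rate / TESTED_CAPACITY:.2f}x")
```

Under those assumed numbers the evening peak alone would need close to double the tested capacity. Change the share or the window and the answer moves, but it is hard to find a realistic scenario for a single census night where 1,000,000 per hour looks comfortable.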

IBM Reimbursement:

IBM and other companies were paid significant sums for their role in the census project. (Many of these figures can be found online.) In the wake of the census issues, many were quick to point out that $9.0 million really wasn't a lot of money for the project - "it essentially only accounts for 90 full time staff for 1 year". But as has been pointed out, many software companies have been able to support much larger user bases with a fraction of the resources - "When Instagram was acquired by Facebook, just three engineers handled 30 million users posting hundreds of millions of photos per month thanks to Amazon Web Services ... WhatsApp, an exception to the rule, used raw hardware to famously support more than 900 million users with only 50 engineers.". So why did the census require such a significant investment? And the question remains - was any portion of the fee contingent on the success of the census?

Australia has a great deal of technology talent. Companies at all levels have produced brilliance in technology. The census was an opportunity to make a great advancement in technology and convince a wider audience in Australia of the benefits of online solutions - and that opportunity has been lost. We still do not know why the census was plagued with issues, but there remain some serious questions that should be resolved.

I for one hope that we learn from the mistakes of the census and look to better capitalise on technology processes in the future. The census could have been executed well. And we hope that the next large technology project for the government is provided with adequate scrutiny, management and resources to deliver on its promises.

DCODE GROUP is a software consultancy working with businesses to adopt technology solutions into their day-to-day processes. We specialise in "systematizing" business processes using technology - looking to identify recurring processes in your business, define those processes, and then use technology to help you replicate, deliver and manage those processes. We look to understand your business and then develop solutions that meet your requirements and assist you in delivering your differentiated offering. Contact us to find out more about how we can assist you in developing custom software for your business.

--

Get updates, tips and industry news delivered directly to you

Decode technology with Dcode

Stay in the loop with everything you need to know.

We care about your data in our privacy policy.

Related reading from Dcode

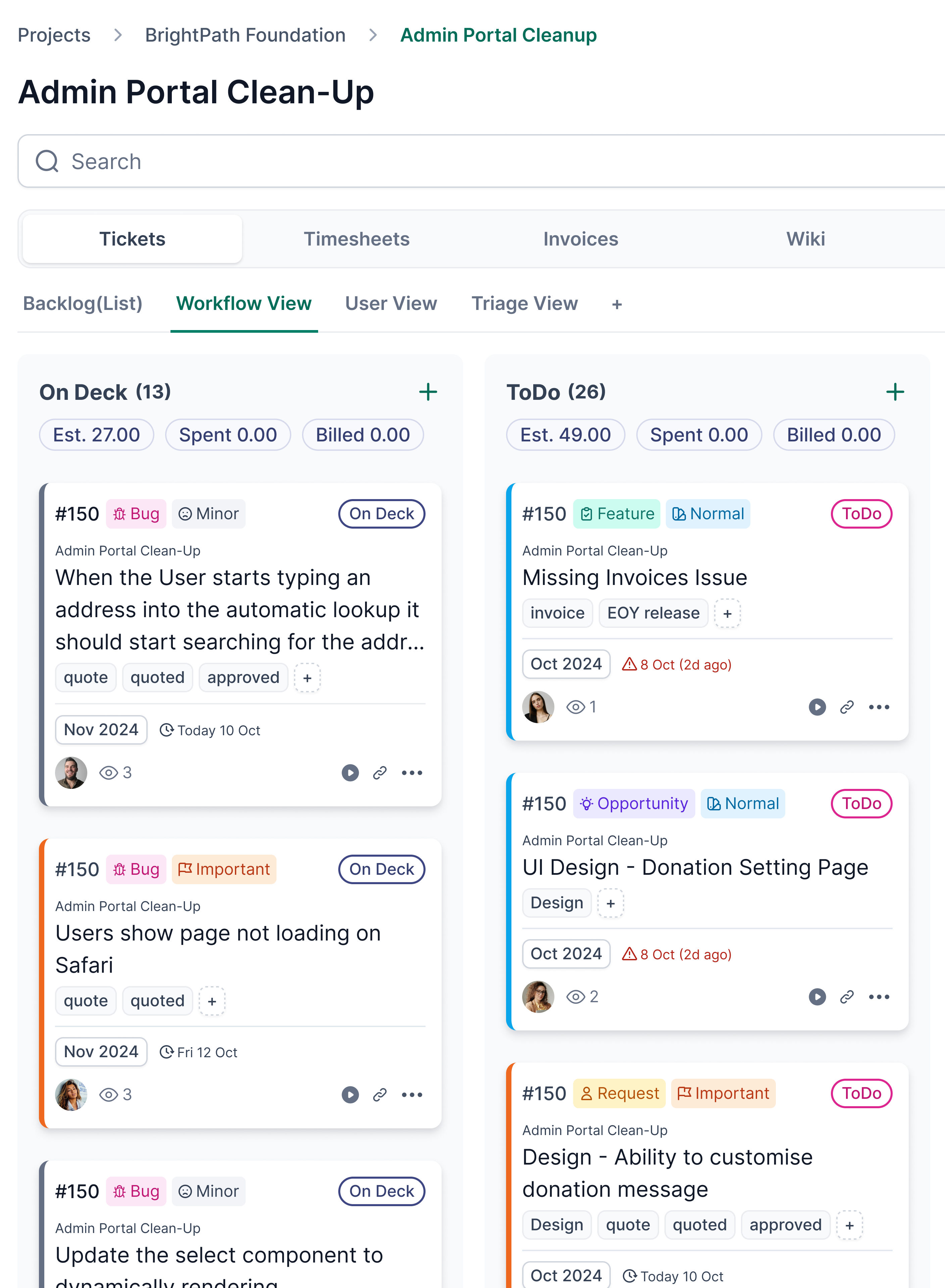

The Hidden Cost of Too Many Tools in Your Agency: Why We Built Kanopi

From Whiteboards to Workflows: How Modern Scheduling Systems Help Businesses Grow

Building a Global Team: How One Exceptional Hire Shaped Dcode’s Vietnam Office

From TradeGecko to Our Own: How Dcode Group Built a New Inventory Management System